Every new marketing model seems to move farther from a fundamental truth – there’s a human on the other side of the screen.

We can sense this growing chasm in marketing's increasingly de-humanized vocabulary – addressable markets, cohorts, targets, segments; we can sense it in blunt, one-size-fits-all multicultural definitions and generational tags.

Despite being awash in data and analytics that tell us what customers did, most companies don’t fully understand why their customers behave the way they do.

A Harvard Business Review Analytic Services study found that just 23% of executives believe their organization understands their customers’ motivations. (Even if this has doubled since 2019, that's still not great.)

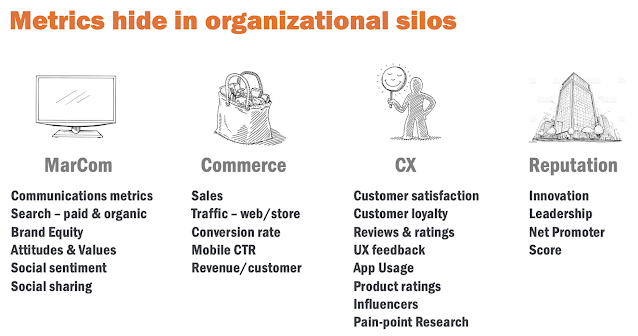

Customer Experience, or CX, comes closest to embracing a human-centered truth. Yet here we are in 2023 and CX remains siloed in many organizations.

Being human-centered is not about going analog. Far from it! Data and marketing technologies have given us superpowers in our ability to be more relevant and personalized.

This is about shifting our mindset – making empathy a core skill; designing more human-centered ways to organize teams; truly understanding what it means to have a relationship with a customer.

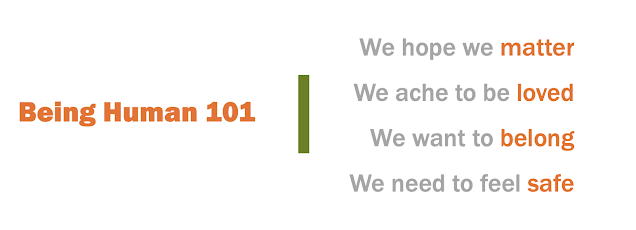

Being Human: 101

In my recent university lectures, I speak to students about human behavior and the power of empathy. With a clear nod to Abraham Maslow, here’s how I summarize for them our basic human motivations:

We create relationships as a way to help satisfy these needs, and the strongest begin with and are sustained through empathy – the ability to see through the eyes of another, without judgement. Empathy helps form meaningful relationships because it builds trust.

If this is true in life, why isn’t this consistently true in marketing?

Prioritizing the “R” in CRM

Several years ago I realized the mistake I had long been making.

While giving a presentation I noticed I was repeating the word relationship on slide after power-pointy slide, e.g., customer relationship, brand relationship, CRM. I hadn't yet invested time to unpack this simple word to understand the dynamics of real relationships.

That “ah-ha moment” helped crystallize a simple truth: what’s true in life should be true in marketing – how we form personal relationships should guide how we form customer relationships.

And that truth ultimately inspired Rehumanize, a marketing consultancy that helps organizations and people grow by harnessing the power of empathy and human relationships.

Rehumanize organizes goals, metrics, and teams around the four dynamics of human relationships – empathy, experiences, endorsement, and energy – aka, "The 4Es."

Connecting the Dots

Rehumanize's 4E Loyalty Model represents Systems Thinking – i.e., a way to make sense of complexity by viewing a problem in terms of wholes and relationships rather than in terms of its parts. In this case, the "whole" is the dynamics of human relationships.

This is what differentiates the 4E model from CX and CRM. Unlike those strategies, 4E integrates the contributions of other disciplines such as media communications, social media, event marketing, influencer strategies, and product development.

4E connects the dots across the enterprise – the benefit of systems thinking – to create a human-centered model to organize and analyze data, plan and prioritize strategies, and foster collaboration across teams.

Designing a human-centered organization sounds daunting, yet the necessary data, resources and skills likely already exist in your company. They are simply disconnected from each other.

The 4E Loyalty Model is underpinned by the metrics we use every day, metrics that currently live in different organizational silos, scattered across disparate reports and discussed in separate meetings.

When seen through the 4Es, these metrics realign to paint a clear picture of the opportunities, progress, and work to be done.

Teams in Marketing, Behavioral Analytics, UX/CX, Customer Care, Product and Corporate Comms all contribute. 4E creates a common vocabulary, shared planning model, and aligned KPIs.

Empathy is a business strategy

Empathy may sound soft, but 4E is a measurable, outcome-oriented system that aligns goals, analytics and action.

Empathy helps accelerate progress on DEI initiatives; it can build authentic cultural relevance and growth among diverse customers; it can strengthen the effectiveness of a company’s CRM investments; it helps inspire new product development.

Spider-Man and Maya Angelou agree.

While it’s true that Big Data and Martech have given us marketing superpowers, it’s also true that “with great power comes great responsibility” (as Uncle Ben advised Peter Parker, aka Spider-Man).

Our responsibility is to use human insights, data and technology to better serve customers – to help organizations and people grow by harnessing the power of empathy and human relationships.

Marketing leaders have always had to hold in their mind two seemingly contradictory ideas: Marketing is about customer empathy; marketing is about profitable growth.

Each statement is true. Each is less effective without the other.

And here, I step aside and let Maya Angelou summarize my 29 paragraphs in 22 words.